Azure Data Factory and Azure Data Lakes for Enterprise

Is your enterprise business set up to take advantage of the Big Data revolution?

Thanks to the confluence of the internet, cloud computing, and the Internet of Things, more data is generated per second today than in entire decades previously: roughly 1.7 MB every second for every person on earth. By some estimates, 90% of the world’s total data has been generated within the past two years.

Enterprise businesses with their finger on the pulse of their data now have a massive competitive advantage—as long as they have the infrastructure in place to load, store, and analyze that data.

But it’s not as simple as collecting the data you have and throwing a data scientist or two at it (if you could even find two to hire). An enterprise is a collection of tools, business units, and departments, all capable of generating valuable data, but rarely in a standardized format.

Billing and invoicing software, CRM software, inventory management, manufacturing, logistics and fleet management, and retail point-of-sale systems all generate data that, taken together, can give an enterprise a holistic understanding of its products, employees, and customers.

And while some of these systems may integrate with one another, the data they generate is rarely unified in a way that allows easy grouping, analysis, and reporting.

Enter Azure’s data services. Used together, Azure Data Factory and Azure Data Lake give enterprises a way to unify and standardize their disparate data sources for processing and analysis. With them, enterprises can analyze the wealth of data at their disposal and extract meaningful insights that can dramatically improve operational efficiency, boost profitability, and identify opportunities for new markets, products, or services. Here’s how:

Enter the Azure Data Factory

Azure Data Factory is a fully managed cloud service that connects diverse data stores (such as Azure Blob Storage, Azure SQL Database, Azure Table Storage, on-premises SQL Server databases, Azure DocumentDB, on-premises file systems, web applications, and FTP servers) with a range of data processing services. This lets data developers load, transform, and shape the data (join, aggregate, cleanse, enrich) for consumption by business intelligence tools.
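As a minimal sketch of what the cleanse, join, and aggregate steps mean in practice, the Python below standardizes records from two hypothetical source systems (a CRM and a billing tool) that disagree on ID casing, currency units, and date formats, then joins them and totals revenue per region. All field names and sample values are invented for illustration, and the script runs locally rather than in Azure; in Data Factory, equivalent logic would live in a pipeline activity or a connected compute service.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical records from two source systems that use different conventions.
crm_customers = [
    {"CustomerID": "C-001", "Name": " Acme Corp ", "Region": "north"},
    {"CustomerID": "C-002", "Name": "Globex", "Region": "SOUTH"},
]
billing_invoices = [
    {"cust": "c-001", "amount_cents": 125000, "date": "2019/03/01"},
    {"cust": "c-001", "amount_cents": 50000, "date": "2019/03/15"},
    {"cust": "C-002", "amount_cents": 99900, "date": "2019/03/20"},
    {"cust": "C-999", "amount_cents": 1000, "date": "2019/03/21"},  # no matching customer
]


def cleanse_customer(row):
    """Standardize CRM rows: upper-case IDs, trimmed names, title-case regions."""
    return {
        "customer_id": row["CustomerID"].strip().upper(),
        "name": row["Name"].strip(),
        "region": row["Region"].strip().title(),
    }


def cleanse_invoice(row):
    """Standardize billing rows: same ID convention, amounts in dollars, ISO dates."""
    return {
        "customer_id": row["cust"].strip().upper(),
        "amount": row["amount_cents"] / 100,
        "date": datetime.strptime(row["date"], "%Y/%m/%d").date().isoformat(),
    }


def revenue_by_region(customers, invoices):
    """Join invoices to customers on customer_id, then sum revenue per region."""
    by_id = {c["customer_id"]: c for c in map(cleanse_customer, customers)}
    totals = defaultdict(float)
    unmatched = []
    for inv in map(cleanse_invoice, invoices):
        customer = by_id.get(inv["customer_id"])
        if customer is None:
            unmatched.append(inv)  # keep for data-quality review instead of dropping silently
            continue
        totals[customer["region"]] += inv["amount"]
    return dict(totals), unmatched


totals, unmatched = revenue_by_region(crm_customers, billing_invoices)
print(totals)
print(len(unmatched))
```

Unmatched invoices are returned rather than discarded, since records that fail to join are often the first sign that two systems disagree on their conventions.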

Azure Data Lake is the central repository where that data is then stored for further analysis and transfer. It can store and analyze petabyte-sized files (a petabyte is a million gigabytes, in case you were wondering) and trillions of objects, making it a scalable, cloud-based solution designed specifically for Big Data workloads. It works with Azure SQL Data Warehouse, Power BI, and Data Factory, and powers Big Data analytics and storage for some of the most successful enterprises in the world.

Once the data is collected in a central repository (Azure Data Lake), it can be transformed and enriched through batch processing. Common options include Azure Data Lake Analytics, Azure HDInsight workloads (such as Hive or Spark SQL), and machine learning operations. Because big data sets are so large, this processing typically runs as long-running batch jobs that prepare the files for the next stage: data analysis.
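The batch pattern itself can be illustrated at toy scale. This Python sketch, assuming a hypothetical point-of-sale extract with `sku` and `units` columns, reads its input a fixed number of rows at a time so memory use stays bounded regardless of file size, and aggregates units sold per SKU. It runs on a single machine; services such as Azure Data Lake Analytics and HDInsight distribute comparable work across many nodes.

```python
import csv
import io
from collections import Counter
from itertools import islice


def read_in_batches(reader, batch_size):
    """Yield lists of up to batch_size rows so only one batch is held in memory."""
    while True:
        batch = list(islice(reader, batch_size))
        if not batch:
            return
        yield batch


def batch_job(lines, batch_size=2):
    """Aggregate units sold per SKU, processing the input one batch at a time."""
    reader = csv.DictReader(lines)
    totals = Counter()
    batches = 0
    for batch in read_in_batches(reader, batch_size):
        for row in batch:
            totals[row["sku"]] += int(row["units"])
        batches += 1
    return totals, batches


# Hypothetical point-of-sale extract; a real job would stream a file from storage.
raw = io.StringIO(
    "sku,units\n"
    "A100,3\n"
    "B200,1\n"
    "A100,2\n"
    "C300,7\n"
    "B200,4\n"
)
totals, batches = batch_job(raw, batch_size=2)
print(dict(totals), batches)
```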

Once data has been enriched and processed, it can be pushed to an analytical data store to be queried by analytical tools. This can be a relational data warehouse, such as the fully managed Azure SQL Data Warehouse, or an interactive Hive database.


Finally, the data is delivered to end users through an analysis and reporting interface such as Power BI. The entire purpose of Big Data is to drive meaningful business decisions by putting data in the hands of the people who need it and making it accessible and digestible to the average employee. Self-service business intelligence tools, with reporting and visualization designed to empower the end user, are essential to making this happen.

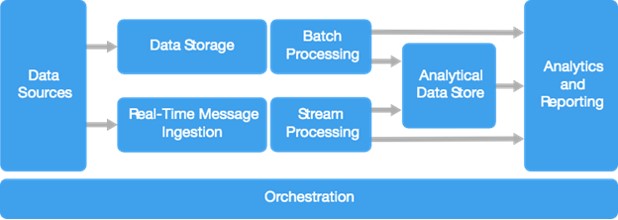

An effective big data architecture framework consists of complex data processing operations and workflows that constantly aggregate data from multiple sources, load it into a data store, apply operations that transform and enrich it, and make it accessible to members across the enterprise through reporting and analytics tools.

By using a fully managed orchestration framework such as Azure Data Factory alongside Azure Data Lake, enterprises can automate much of the legwork that goes into building and maintaining these architectures and take full advantage of the wealth of data available to them.
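Orchestration largely comes down to running each step only after the steps it depends on have succeeded. The Python sketch below models that with a hypothetical five-activity pipeline and the standard library's `graphlib.TopologicalSorter` (Python 3.9+). It loosely mirrors the dependency conditions between activities in a Data Factory pipeline, but it is not Data Factory's API, and it runs activities one at a time where a real orchestrator would run independent ones (the two copy steps) in parallel.

```python
from graphlib import TopologicalSorter  # Python 3.9+

# Hypothetical pipeline: each activity maps to the set of activities it depends on.
activities = {
    "copy_crm": set(),
    "copy_billing": set(),
    "transform": {"copy_crm", "copy_billing"},
    "load_warehouse": {"transform"},
    "refresh_reports": {"load_warehouse"},
}


def run_pipeline(activities, run_activity):
    """Run activities in dependency order; stop at the first failure so nothing downstream runs."""
    order = []
    for name in TopologicalSorter(activities).static_order():
        if not run_activity(name):
            raise RuntimeError(f"activity {name!r} failed; downstream activities skipped")
        order.append(name)
    return order


# Stand-in runner that always reports success.
order = run_pipeline(activities, lambda name: True)
print(order)
```

Stopping at the first failure is the simplest policy; a production orchestrator would typically add retries, timeouts, and alerting on top of the same dependency graph.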

Azure Data Factory and Azure Data Lake are essential components of any enterprise big data architecture framework.

At OnActuate, we’re ready to take your global enterprise to the next level in data management with Azure Data Factory and Azure Data Lake. Our team knows the value of your data and will help you capitalize on the available technology and transform the way you handle the exponential growth of your data. Contact us today to learn more!